Recording environments

Objectives

Understand what containers are and what they are useful for

Discuss container definition files in the context of reusability and reproducibility

Instructor note

10 min teaching/discussion

10 min demo

What is a container?

Imagine if you didn’t have to install things yourself, but instead you could get a computer with the exact software for a task pre-installed. Containers effectively do that, with various advantages and disadvantages. They are like an entire operating system with software installed, all in one file.

Kitchen analogy

Our codes/scripts <-> cooking recipes

Container definition files <-> like a blueprint to build a kitchen with all utensils in which the recipe can be prepared.

Container images <-> showroom kitchens

Containers <-> a real connected kitchen

Just for fun: which operating systems do the following example kitchens represent?

From definition files to container images to containers

Containers can be built to bundle all the necessary ingredients (data, code, environment, operating system).

A container image is like a piece of paper with the whole operating system written on it. When you run it, a transparent sheet is placed on top to form a container. The container runs and writes only on that transparent sheet (and on any other mounts that have been layered on top). When you are done, the transparent sheet is thrown away. This can be repeated as often as you want, and the base is always the same.
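The "transparent sheet" behaviour can be sketched with Docker, assuming Docker is installed and the image ubuntu:24.04 can be downloaded. The file name /scratch.txt is only an illustration. The first container writes a file to its own writable layer. A second container started from the same image does not see it.

```shell
IMAGE="ubuntu:24.04"

if command -v docker >/dev/null 2>&1; then
    # First container: create a file on the writable layer, then discard the container (--rm).
    docker run --rm "$IMAGE" sh -c 'touch /scratch.txt && ls /scratch.txt'
    # Second container from the same image: it starts from the unchanged base, so the file is absent.
    docker run --rm "$IMAGE" sh -c 'ls /scratch.txt 2>/dev/null || echo "/scratch.txt not found"'
else
    echo "docker not found; skipping the demo"
fi
```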

Definition files (e.g., Dockerfile or Singularity definition file) are text files that contain a series of instructions to build container images.

Containers can be useful to you in different ways

Installing a certain software is tricky, or not supported for your operating system? - Check if an image is available and run the software from a container instead!

You want to make sure your colleagues are using the same environment for running your code? - Provide them an image of your container!

What if this does not work because they are using a different architecture than you? - Provide a definition file so that they can build an image suitable for their computers. This does not recreate your exact environment, but in most cases one that is similar enough.
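To find out whether an architecture mismatch is likely, you and your colleagues can compare the CPU architecture of your machines. The sketch below uses the standard uname command. The variable name host_arch is only an illustration.

```shell
# Print the CPU architecture of this machine (for example x86_64 or aarch64).
host_arch="$(uname -m)"
echo "Host architecture: ${host_arch}"
```

If the reported values differ between two machines, an image built on one of them may not run on the other, and building from the definition file is the safer route.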

The container recipe

Here is an example of a Singularity definition file (reference):

Bootstrap: docker

From: ubuntu:24.04

%post

apt-get -y update

apt-get -y install fortune cowsay lolcat

%environment

export LC_ALL=C

export PATH=/usr/games:$PATH

%runscript

fortune | cowsay | lolcat

Popular container implementations:

Docker

Singularity (popular on high-performance computing systems)

Apptainer (popular on high-performance computing systems, fork of Singularity)

podman

They are to some extent interoperable:

podman is very close to Docker

Docker images can be converted to Singularity/Apptainer images

Singularity Python can convert Dockerfiles to Singularity definition files
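As a sketch of the Docker-to-Apptainer conversion, assuming Apptainer is installed and Docker Hub is reachable, the following pulls the ubuntu:24.04 Docker image and stores it as a single image file. The output file name is an arbitrary choice.

```shell
SIF_FILE="ubuntu_24.04.sif"

if command -v apptainer >/dev/null 2>&1; then
    # Download the Docker image and convert it to a SIF file (skipped if the file already exists).
    [ -f "$SIF_FILE" ] || apptainer pull "$SIF_FILE" docker://ubuntu:24.04
    # Run a command inside the converted image to confirm which distribution it contains.
    apptainer exec "$SIF_FILE" cat /etc/os-release
else
    echo "apptainer not found; skipping the conversion"
fi
```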

Pros and cons of containers

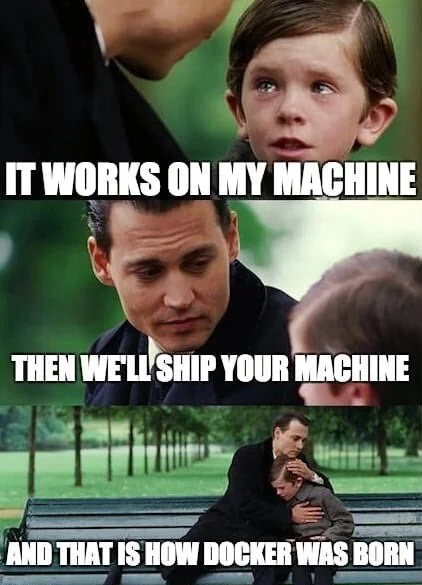

Containers are popular for a reason - they solve a number of important problems:

Allow for seamlessly moving workflows across different platforms.

Can solve the “works on my machine” situation.

For software with many dependencies, which in turn have their own dependencies, containers offer possibly the only way to preserve the computational experiment for future reproducibility.

A mechanism to “send the computer to the data” when the dataset is too large to transfer.

Installing software into a file instead of into your computer (removing a file is often easier than uninstalling software if you suddenly regret an installation).

However, containers may also have some drawbacks:

Can be used to hide away software installation problems and thereby discourage good software development practices.

Instead of a “works on my machine” problem, we may end up with a “works only in this container” problem.

They can be difficult to modify.

Container images can become large.

Danger

Use only official and trusted images! Not all images can be trusted! There have been examples of contaminated images, so investigate before using images blindly. Apply the same caution as when installing software packages from untrusted package repositories.
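One way to investigate an image before trusting it is to look at the metadata stored inside it. The sketch below assumes Apptainer is installed and uses cowsay.sif as a placeholder file name. Not every image records its definition file, so the first command may print nothing.

```shell
# IMAGE_FILE is a placeholder; point it at the image you want to examine.
IMAGE_FILE="cowsay.sif"

if command -v apptainer >/dev/null 2>&1 && [ -f "$IMAGE_FILE" ]; then
    # Show the definition file that was used to build the image, if it was recorded.
    apptainer inspect --deffile "$IMAGE_FILE"
    # Show metadata labels such as the build date.
    apptainer inspect --labels "$IMAGE_FILE"
else
    echo "apptainer or $IMAGE_FILE not available; skipping the inspection"
fi
```

This does not prove that an image is safe, but it shows where the image claims to come from and what was installed into it.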

Exercises

Containers-1: Time travel

Scenario: A researcher has written and published their research code, which requires a number of libraries and system dependencies. They ran their code on a Linux computer (Ubuntu). One very nice thing they did was to also publish a container image with all dependencies included, as well as the definition file (below) to create the container image.

Now we travel 3 years into the future and want to reuse their work and adapt it for our data. However, the container registry where they uploaded the container image no longer exists. But luckily we still have the definition file (below)! From this we should be able to create a new container image.

Can you anticipate problems using the definition file 3 years after its creation? Which possible problems can you point out?

Discuss possible take-aways for creating more reusable containers.

1 Bootstrap: docker

2 From: ubuntu:latest

3

4 %post

5 # Set environment variables

6 export VIRTUAL_ENV=/app/venv

7

8 # Install system dependencies and Python 3

9 apt-get update && \

10 apt-get install -y --no-install-recommends \

11 gcc \

12 libgomp1 \

13 python3 \

14 python3-venv \

15 python3-distutils \

16 python3-pip && \

17 apt-get clean && \

18 rm -rf /var/lib/apt/lists/*

19

20 # Set up the virtual environment

21 python3 -m venv $VIRTUAL_ENV

22 . $VIRTUAL_ENV/bin/activate

23

24 # Install Python libraries

25 pip install --no-cache-dir --upgrade pip && \

26 pip install --no-cache-dir -r /app/requirements.txt

27

28 %files

29 # Copy project files

30 ./requirements.txt /app/requirements.txt

31 ./app.py /app/app.py

32 # Copy data

33 /home/myself/data /app/data

34 # Workaround to fix dependency on fancylib

35 /home/myself/fancylib /usr/lib/fancylib

36

37 %environment

38 # Set the environment variables

39 export LANG=C.UTF-8 LC_ALL=C.UTF-8

40 export VIRTUAL_ENV=/app/venv

41

42 %runscript

43 # Activate the virtual environment

44 . $VIRTUAL_ENV/bin/activate

45 # Run the application

46 python /app/app.py

Solution

Line 2: “ubuntu:latest” will mean something different 3 years into the future.

Lines 11-12: The compiler gcc and the library libgomp1 will have evolved.

Line 30: The container uses requirements.txt to build the virtual environment but we don’t see here what libraries the code depends on.

Line 33: Data is copied in from the hard disk of the person who created it. Hopefully we can find the data somewhere.

Line 35: The library fancylib has been built outside the container and copied in but we don’t see here how it was done.

The Python version will be different and hopefully the code still runs.

Singularity/Apptainer will have also evolved by then. Hopefully this definition file still works.

There is no contact address to ask more questions about this file.

(Can you find more? Please contribute more points.)
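Two of the points above can be checked mechanically. The following is a minimal sketch of such a check, assuming the definition file is stored without line numbers. The function check_def is a hypothetical helper written for this illustration and is not part of Singularity or Apptainer.

```shell
# check_def is a hypothetical helper that flags two reusability problems in a definition file.
check_def() {
    local def_file="$1"

    # A missing tag or the floating tag "latest" points to a different image over time.
    if grep -Eq '^From:[[:space:]]*[^:[:space:]]+(:latest)?[[:space:]]*$' "$def_file"; then
        echo "WARNING: base image is not pinned to a specific version"
    fi

    # Paths under /home exist only on the original author's computer.
    if grep -Eq '^[[:space:]]*/home/' "$def_file"; then
        echo "WARNING: files are copied from a personal home directory"
    fi
}

# Usage: check_def mycontainer.def
```

A check like this only catches the most obvious issues. Pinned versions in requirements.txt and a documented build of external libraries still need to be reviewed by hand.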

Work in progress: Please contribute a corresponding example which demonstrates this in the context of R and renv.

(optional) Containers-2: Installing the impossible.

When you are missing privileges for installing certain software tools, containers can come in handy.

Here we build a Singularity/Apptainer container for installing the cowsay and lolcat Linux programs.

Make sure you have apptainer installed:

$ apptainer --version

Make sure you set the apptainer cache and temporary folders.

$ mkdir ./cache/

$ mkdir ./temp/

$ export APPTAINER_CACHEDIR="./cache/"

$ export APPTAINER_TMPDIR="./temp/"

Save the container recipe introduced above as cowsay.def and build the container from it.

$ apptainer build cowsay.sif cowsay.def

Let’s test the container by entering it with a shell:

$ apptainer shell cowsay.sif

We can verify the installation.

$ cowsay "Hello world!"|lolcat
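As an alternative to opening a shell, the image can also be used non-interactively. The sketch below assumes that cowsay.sif was built as described in this exercise and sits in the current directory.

```shell
SIF="cowsay.sif"

if command -v apptainer >/dev/null 2>&1 && [ -f "$SIF" ]; then
    # "run" executes the %runscript section of the definition file (fortune | cowsay | lolcat).
    apptainer run "$SIF"
    # "exec" runs a single command of your choice inside the container.
    apptainer exec "$SIF" cowsay "Hello world!"
else
    echo "apptainer or $SIF not available; skipping"
fi
```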

(optional) Containers-3: Explore two really useful Docker images

You can try the following if you have Docker installed. If you have Singularity/Apptainer and not Docker, the goal of the exercise can be to run the Docker containers through Singularity/Apptainer.

Run a specific version of RStudio:

$ docker run --rm -p 8787:8787 -e PASSWORD=yourpasswordhere rocker/rstudio

Then open your browser to http://localhost:8787 with login rstudio and password “yourpasswordhere” used in the previous command.

If you want to try an older version you can check the tags at https://hub.docker.com/r/rocker/rstudio/tags and run for example:

$ docker run --rm -p 8787:8787 -e PASSWORD=yourpasswordhere rocker/rstudio:3.3

Run a specific version of Anaconda3 from https://hub.docker.com/r/continuumio/anaconda3:

$ docker run -i -t continuumio/anaconda3 /bin/bash

Resources for further learning

Keypoints

Containers can be helpful if a complex setup is needed to run a specific piece of software.

They can also be helpful for prototyping without “messing up” your own computing environment, or for running software that requires a different operating system than your own.